Wikipedia Founder on Building Trust

Brian Lehrer: It's The Brian Lehrer Show on WNYC. Good morning again, everyone, and happy birthday to Wikipedia. The crowdsourced online encyclopedia turns 25 on January 15th. It hits that quarter-of-a-century mark as another political target of the American right, alleging Wikipedia is biased to the left. Some call it Woke-a-pedia. Elon Musk even launched an AI alternative called Grokipedia. Never mind that Grokipedia told a user recently that Musk is more fit than LeBron James. Political division is hardly the only Wikipedia story. It has millions of articles, has logged billions of searches, appears in many languages.

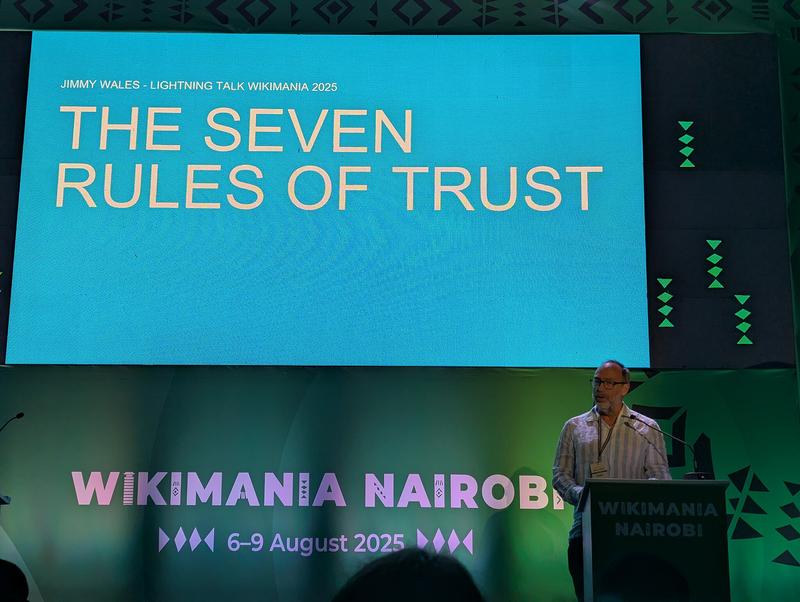

Like us, it's a not-for-profit information source. It has now inspired co-founder Jimmy Wales to write a 25th anniversary book called The Seven Rules of Trust: A Blueprint for Building Things That Last. He sees trusting strangers as being at the core of Wikipedia's success. Way beyond Wikipedia, he addresses head-on the decline in trust that is such a defining feature of our country and our world, and he hopes to contribute to turning that around. Jimmy Wales joins us now. Jimmy, thanks for coming on. Congratulations on 25 years, and welcome back to WNYC.

Jimmy Wales: Great. Well, thanks for having me on. It's an exciting time, and I'm amazed. It's been 25 years. That's a long time.

Brian Lehrer: Let's do a little of the origin story first for our listeners who may not know it. You started Wikipedia 25 years ago, when your newborn baby was very ill, I see. Can you tell that story?

Jimmy Wales: Yes, so I had been working for about a couple of years on a project called Nupedia, which was the same vision to have a free, neutral, high-quality encyclopedia in all the languages of the world. Nupedia wasn't working. It was very top-down. It was very untrusting actually. I was getting very frustrated, and I was near the point of giving up. Then my daughter was born and was very, very ill and in the hospital. It was quite a scary time.

One of the things I did as a frightened first parent is go to the internet to try and learn more about her ailment. It wasn't very good. There were some academic papers, which were too hard for me to understand. There were some random blog posts. I really realized we have to do this. We have to find a way to create a good encyclopedia that will help people in this situation. As a last-ditch thing, one of my employees had shown me the idea of Wiki, which just means a website anyone can edit. I thought, "You know what? We just have to try it." When I got home from the hospital on January 15th, I launched Wikipedia. Well, it seems to have worked.

Brian Lehrer: The rest is history, but there's a lot of history there. Let me follow up on-

Jimmy Wales: A lot of history there.

Brian Lehrer: -one aspect of that story, though. You said your original concept, Nupedia, was top-down. As I understand it, in that formation, contributors would have had to show that they were experts in their field. Why did you think allowing anyone to write and edit about things as technical as a serious health condition for a newborn would produce better information than experts in their fields?

Jimmy Wales: Well, at the beginning, it was just like, "Well, we have to do something to get started." If you think about certain types of entries, you realize immediately that there isn't really a need for experts. Here's an article about the history of Paris. Okay. Well, I think anybody, an intelligent person, could start writing some things. I had assumed in the early days that we would have to have an editor-in-chief of the medical section, who would then do all that.

As it turns out, there are different levels of expertise. A lot of people who are experts, medical experts, and so forth, do come. They work in Wikipedia. They edit, and they come contribute their time. Then, other people, lots of Wikipedians have become expert, not necessarily in that particular subject matter, but in writing an encyclopedia, which is also an important skill that I think a lot of people haven't really thought about very much. I didn't know at the beginning. As it turns out, it worked out pretty well.

Brian Lehrer: This was a wisdom-of-the-crowds premise, or as you put it in the book, people should be able to talk and sort out disagreements. You had to get strangers on the internet to cooperate and trust each other. There's that word, the theme of your book, right? Trusting. Considering the polarized world that we're living in today that you're trying to address, where competing political and culture war camps can't agree on basic facts, never mind how to interpret their meaning, or to trust each other to disagree in good faith. How much do you still believe your premise, and how much do you think it turned out to be too idealistic, at least in some realms?

Jimmy Wales: Well, I still very much believe in the premise. I think when we look at the culture wars and the political world and aspects of social media, it's very easy to get to a very dark view of human beings and of human nature and feel a bit lost and hopeless, but then we can stop and think and remember all the day-to-day interactions and people we meet and people we know. We may disagree with them, but we're able to be civil. We're able to have constructive dialogue. I don't think it's impossible. I think it still is very much possible. Not only possible, but really necessary.

When I looked at the longest-ever government shutdown, which, in my view, was in no small part because the political parties aren't able to trust each other to have a little bit of give-and-take to say, "Look, you give us this, we'll give you that. Next year, we'll take care of you. Let's figure out a compromise." Instead, it's nuclear ultimatums against each other. That's not useful. That's not a practical way to run anything. We do need to get back to thinking about, how do we build things that last? How do we find ways of recognizing the good in others and understanding each other a little bit better? That's really what the book is all about. I'm hopeful.

Brian Lehrer: Listeners, our phones and text message thread are open for your questions and comments and stories for Jimmy Wales, co-founder of Wikipedia, on the occasion of its 25th birthday and author now of The Seven Rules of Trust: A Blueprint for Building Things That Last. 212-433-WNYC, 212-433-9692. We would love to hear from anyone who is active on or who has ever contributed to Wikipedia, yourself or anyone else, with a comment, a story, or a question for Jimmy Wales. 212-433-WNYC, 433-9692. We'll get to the book. I promise. We will tick off all seven rules of trust and talk about some of them.

Continuing on the history and current state of Wikipedia a little bit, who tends to write and edit Wikipedia when it comes to political or culture war topics? I can tell you that hosting a talk show, where we also believe in civil exchange among people who disagree, the people most likely to call in or text, certainly not all, but those most likely who are motivated enough to make the effort tend to be the most opinionated people on any side of an issue, not those who trust anyone on the "other side." How much is that your experience with which members of the public participate in those topics on Wikipedia, and, therefore, how much you and others in leadership have to ride that?

Jimmy Wales: Obviously, if there is a big controversial topic, and particularly if it flares up in the news or whatever, we will sometimes see an influx of incomers, people who come with a war mentality, and "It's a battleground. I'm going to do this," but then they meet up with the regulars, the regular Wikipedians, who, in general, care far more about Wikipedia, about what we're doing, about the project, than they do about any particular fight.

In fact, one of the techniques that has been used in the past by the arbitration committee is to have a period of moratorium, where they basically ban everybody on both sides who have any kind of an opinion and invite in just good Wikipedians who aren't involved to try and sort out the issue. Now, obviously, that's a pretty extreme move, and that only happens from time to time. Broadly, I think it is really important that we cultivate within Wikipedia a culture and a community that is about, "Okay, let's be fair. Let's be neutral. Let's bring in the accounts of all sides," and recognize that sometimes it's really hard because emotions are high, and there are difficult issues.

It varies over time. Broadly, I would say, the kinds of people who really are into Wikipedia, who are really here year in and year out, are just nerds, not particularly political, really interested in history and science, and all kinds of topics. I think that's really important. Those are the people that we should all elevate and value. That's actually one of the problems with social media, is that those people don't get elevated and valued. Instead, those warriors do.

Brian Lehrer: Let me ask you about one difficult example in that vein. Last night, preparing for this, I read, of all things, the Wikipedia page about your book. One of the things that I learned was that you said on CNN that the Wikipedia page called "Gaza genocide" was, "One of the worst Wikipedia entries I've seen in a long time." I looked, and I saw it was still up, and presented genocide there as a fact, not as a label that's being debated. The entry starts, "The Gaza genocide is the ongoing intentional and systematic destruction of the Palestinian people in the Gaza Strip carried out by Israel during the Gaza War." What, to you, made it a bad entry at the time you spoke to CNN? I'm curious if you've checked it out since.

Jimmy Wales: Yes, so I think for Wikipedia, we have a very, very strong commitment to neutrality. The complaint that I have had, and I've launched a long conversation in the community that's still ongoing about this, is not just about this article but about that question of neutrality: "How do we make sure that we don't end up with this kind of a bad result, and what do we do about fixing it?"

Now, what doesn't happen, and this might surprise people, is I don't wade in like an Elon Musk and [chuckles] chop people down and force my point of view through. It's always a dialogue. It's always a discourse. My view is very simple. If you don't have near unanimity in the community over such a very strong statement, then you shouldn't say it in the voice of Wikipedia. You should report very accurately on-- there is some really serious heavy-hitting people and groups of people and bodies who are calling this a genocide.

You can't ignore that. You have to report on that, but you also have to report fairly that this is not a universal view and that, actually, Wikipedia itself should never take a stand on a controversial issue. Like I say, it gets really hard in these cases where emotions are very high. People are very upset about what's been happening in Israel. I know I'm upset both about the original attacks by Hamas and the response.

It's a human tragedy any way you look at it, but that doesn't mean that we should drop our principles and our values. We should really step back and say, "Look, even though this is something people are very upset about, we have to remain really calm, really neutral. We have to report fairly on all sides of the questions, and we should not be taking a side." That's a conversation. I think if you check back in six months or a year, it'll be different. I'm just one person. I'm just me. I'm going to make my case as best I can.

Brian Lehrer: Claudia in Ossining, you're on WNYC with Jimmy Wales, co-founder of Wikipedia. Hi, Claudia.

Claudia: Good morning. Oh, am I excited. Thank you, Mr. Wales. I am a true believer. I couldn't say enough of respect toward Wikipedia. About 10 years ago, I was teaching sustainability at Pace University in Pleasantville in New York. I worked with one of the reference librarians. We developed a whole class, an entire hour-and-a-half class on, how do you use Wikipedia? The students at first were shocked when we suggested it. They said their high school teachers had nixed Wikipedia, which is a riot.

Now, when you think about AI, Wikipedia, it's like so far superior. There's no comparison. What we taught them were the things that most people wouldn't know about, like looking at the top banners. Is it a contested site? Looking at the references at the bottom, looking behind the scenes, at the transparent discussions like you're describing. Then last but not least, I love that map of the flag showing in real time all over the world where people are working on Wikipedia pages. It is such a democratic process. It's incredible. The last thing I would just say, on a personal note, my uncle, who was a Yiddish folk singer and passed away in 1987, I just checked, the last update to that page was in February of this year.

Jimmy Wales: Wow.

Claudia: It's alive, this product. It's beautiful. Thank you, and I'd love to hear more about what you have to say.

Jimmy Wales: Oh, well, thank you. That's all very kind. I think that question of transparency, which is one of my seven rules of trust, where we say at the top, "The neutrality of this article has been disputed," or "The following section doesn't cite any references." It's a funny thing how that increases trust. You would think telling people that this is not quite right, that would decrease trust, right? No, it actually increases trust because people say, "Oh, okay, that's good." I always joke, "I wish The New York Times would sometimes run a banner at the top saying, 'We had a big fight in the newsroom about this, but we decided to run it. Just be aware. Some of the journalists weren't quite comfortable with it.'"

Brian Lehrer: Here's a text along the same lines as your fan who just called in. Listener writes, "I was quite skeptical about Wikipedia in the beginning. Really doubted how non-experts could arrive at truth or accuracy. That changed in 2012, when my middle schooler began editing the page for an obscure bird and embellished a few things. The Wikipedia staff gently corrected the entry. My child reinserted their original comments, and this went a few rounds until the computers they had used were blocked from editing for quite a while. That completely changed my view of Wikipedia's accuracy, and I now go there first when I'm learning about a new topic." I'll bet you like that one.

Jimmy Wales: Yes, that is good. What's interesting and I like in that story, this detail wasn't in there. Hopefully, when somebody comes in and they do something, and it's often young people and they do a little prank or something like that, of course, hopefully, it gets corrected very quickly. Hopefully, they get a pretty nice message saying, "Hey, don't do that. That's not really what we do here." You don't get blocked right away. You get a little, like, "Oh, hold on. Remember, we're trying to make an encyclopedia."

There's a great story, which is in the book, about Keilana, a very well-known Wikipedian. Emily Temple-Wood is her name. She started out when she was very young by vandalizing Wikipedia. Then somebody was nice to her, and she felt so guilty. She devoted a lot of time to making up for her mistake and doing a lot of good edits to Wikipedia and really headed up a project to add more women scientists to Wikipedia and things like that. There's all these great stories that come out of, assuming good faith and saying, "Okay. Look, yes, you did a prank, whatever. Behave yourself, and come on and join us. It's actually more fun to do something productive."

Brian Lehrer: It's interesting. I was just about to ask you your reaction to people who now call it Woke-a-pedia, but then we got this text, which I thought was going to reinforce that view, but listen to where it goes. The person writes, "This neutrality business. I agree that it's something to strive towards, but it's completely unrealistic to pretend like neutrality actually exists. Neutrality policies often tend to favor more mainstream."

This is where the right goes as well, but this listener writes, "Neutrality policies often tend to favor more mainstream and right-leaning voices and suppress left-wing voices, so it is ironic that the right wing is so up in arms about Wikipedia. Mr. Wales himself--" you can contrast this if you want, I mean, contradict it. "Mr. Wales himself is a known right-leaning techno-libertarian." Your take on yourself, and also what do you think when you see it called by people on the right "Woke-a-pedia"?

Jimmy Wales: Yes. Well, I certainly would say my personal politics are completely irrelevant to almost everything in the world, except the only area I have any expertise in is freedom of expression and internet policy. Yes, no, I think the idea that, okay, if you-- and this has been expressed by the right quite a lot, that if you really focus on mainstream sources and reliable sources, you're going to exclude or negate minority opinions.

It's something to grapple with. Clearly, what you really want to do is to say, "Look, there is a received view, and it's very well-backed by science, for example." If there are dissenting views, they need to be treated fairly and thoughtfully, and they need to be explained, but you wouldn't elevate them for no reason into being the mainstream. When people come to an encyclopedia, they really want to understand, like, "What's the state of knowledge on this today?"

You want to say, "Well, look, here's the main views and here's this and that, and there are these dissenting views, maybe on the left, maybe on the right, and so on." That's really what an encyclopedia should do. Now, if you're writing your own book or you're writing a blog of opinion or whatever, then sure, by all means, pursue your passion and put down what you believe. That isn't really what an encyclopedia should do.

You know what? It's interesting. As it turns out, a lot of people who are themselves pretty ideological often have a really strong capacity to do this, to say, "Look, I'm happy to work with someone who disagrees with me vehemently, as long as we're working together in a polite and friendly way to explain the issue, because I'm quite sure if anybody understood the issue, they would probably side with me." They might both be thinking the same thing to say, "Yes, let's explain the argument fairly, because then people will see."

I think it's people who are less confident in their own beliefs often, but still ideological, are the ones who are terrified to let the other side speak. They really want something that's biased. We're always looking for people who are confident in their beliefs and relaxed and able to say, "Well, look, epistemic humility. I'm willing to listen to the other side. I'm willing to have a good discourse." It should be courteous. It should be comprehensive. I don't think there's any choice but to be neutral because, otherwise, you end up with absolutely intractable problems where you just have to block people who disagree with you. That doesn't really lend you to a good process of seeking the truth.

Brian Lehrer: Yes, and I saw on this critique that you apparently get from both sides about relying on mainstream sources for Wikipedia entries. I see that in your New York Times interview recently, you defended that in part by saying you won't apologize for prioritizing information from mainstream media and quality newspapers and magazines, as you put it.

You told the Times, "We're not about to say, 'Gee, maybe science isn't valid after all. Maybe the COVID vaccine killed half the population.' No, it didn't. That's crazy, and we're not going to print that." That's part of the complexity of what you do, right? We deal with that question, too. You want to maximize openness to multiple points of view. At the same time, you want to say what's true and reject things that are false.

Jimmy Wales: Yes, exactly. There are cases where that's easier and cases where that's harder. I think in the cases where it's harder, the best thing to do is to accurately and fairly summarize all the different points of view and don't take sides.

Brian Lehrer: Let's hear from some Wikipedia contributors. Mano on the Lower East Side, you're on WNYC with Jimmy Wales. Hi, Mano.

Mano: Good morning. I am from Iran, and I was puzzled by some assassinations in Iran, which was clarified for me when I looked up "assassination in Iran" in Wikipedia. Then, when I went back to read again this topic in Wikipedia, it had disappeared. I know this happened a long time ago, but how do things disappear from Wikipedia?

Brian Lehrer: Mano, thank you. I think he told our screener that he had added information and then found that that disappeared. Jimmy, go ahead.

Jimmy Wales: Yes, great. There is a page, I just looked it up, called "List of Iranian assassinations." I don't know how good it is. There's a banner at the top, very transparently saying, "This article has multiple issues." You can go on the talk page and see. One of the main reasons that information gets moved or disappeared is that, is there a quality source? Just coming to write your personal experience isn't really the right way to do it in Wikipedia.

Oftentimes, something that someone wants to put in Wikipedia, they come in. They don't have a really good source, and they don't have good sourcing and so on and so forth. Unless it's encyclopedic and has quality sourcing, then it will tend to go away. Oftentimes, there's just editorial reasons that things get moved around. Maybe that information was added in one place, and then it got moved, and then you couldn't find it later. That's unfortunate. Hard to say without looking into the specific example.

There is a process called "nomination for deletion," and then a deletion debate takes place. One of the things that happens here is it's unfortunate something gets nominated for deletion, which, by the way, any person on the planet can nominate something for deletion, and then a process takes place. Sometimes when that happens and there's a discussion ongoing, then I see people on Twitter going crazy, "Oh, Wikipedia is trying to delete this." It's like, "No, one person nominated it, and there's a discussion." By the way, so far, two hours in, it's 40-1 that, obviously, we're going to keep this. Nothing to get excited about. It's just part of the process.

Brian Lehrer: On a lighter note, I think. Susie in Astoria, you're on WNYC with Jimmy Wales. Hi, Susie.

Susie: Hey there. I'm on a really minor but I've been told it's an important thing. I'm a punctuation princess.

Jimmy Wales: [laughs] Right.

Brian Lehrer: Meaning?

Susie: I will just dive into an article, or it'll come up, and I'll just start putting commas everywhere. Sometimes I'm wrong, and somebody gets back to me. I've written one article, and it was on my failed PhD interest.

Jimmy Wales: Oh, that's great. I love that. You're probably one of those people who would have a pretty strong opinion about the difference between a dash and an em dash, because that's something we fight about quite a lot.

Susie: Do you know, I'm more interested in the Harvard/Oxford comma, because I think that's where people get mad at me a lot.

Jimmy Wales: Oh, that's great.

Susie: The big one. Commas inside or outside of quotation marks.

Jimmy Wales: Oh.

Susie: That's a weird debate. Weird debate.

Jimmy Wales: That's a weird debate. I don't know where you come down. I am not very good, but I am a computer programmer. I tend to want those commas outside the quotation marks, but I know it's not always something people would agree on.

Susie: I want them inside, but I think that you win, actually, in Wikipedia land. For anybody that cares, there are a lot of rules. As you said, anybody can do it. There's meet-ups in New York where they say, "Come out. We'll teach you how to do it." I have to say, the people in the New York Wikipedia scene, I maybe see them once or twice a year. They're so kind and so nice. There's a wiki-picnic every year just to do the social side of Wikipedia.

Jimmy Wales: Yes, that's something most people don't realize is that we do have in-person get-togethers. If you go and meet the Wikipedians, what you'll find is just really nice people, lovely, lovely people, and very nerdy. Whenever I hear Woke-a-pedia, I think, "Oh, you probably think you're going to some sort of communist meeting or something." It's like, "No, no, just some nerds really interested in--" My favorite expression, I've just learned this, I've never heard it in my life, "Punctuation princess." I'm going to carry that with me. I love it.

Brian Lehrer: Caller of the week. Susie, thank you very much. Is Google AI hurting Wikipedia? It seems like Google is now an AI internet encyclopedia itself. Google searches don't just give us links anymore. I'm sure I don't have to tell you. They often begin with an entry on the topic written by AI. I've read that it's hurting news organizations because fewer people click on the news organization links anymore. They increasingly see the Google AI summary, and that's enough for them. Is Wikipedia being affected in that way?

Jimmy Wales: It's really hard to tell. It's something we're looking into. We did note in a blog post a few months ago, a couple of months ago, that we've seen an 8% decline in human traffic to Wikipedia, and also a corresponding increase in bot traffic, whatever that means. There was a Pew Research study that looked at Google results and found that in traditional search results, the top 10 links, as they've always had, Wikipedia appears about 3% of the time. In AI summaries, Wikipedia appears and is linked to about 6% of the time.

To that extent, and this is very rough and an emerging and changing thing, you would say, "Well, we're getting twice as many links as we used to get from Google, but people click through less often." Maybe about half as often, which would net out to nothing for our traffic. I think that something in that ballpark is probably correct. If you ask Google a question, well, 20 years ago, Google had no idea they answered any question. It was just a search engine. Now, ask, "How old is Tom Cruise?" Google will tell you, but they also link to Wikipedia there.

Depending on what you're doing, you might just stop there. It's like, "Oh, I got the answer to my question. That's it. I'm done. I'm going to go back to what I was doing." In other cases, maybe you ask your question, and you're like, "Oh, okay, that's from Wikipedia. Oh, actually, I've been thinking, and I wanted to go read more." You go, and you click and read more. Hard to say right now. Certainly, compared to some organizations, we haven't seen a super dramatic impact on our traffic.

There's a tech website, Stack Overflow, where programmers go to ask questions, "Oh, I've got this bug. How do I fix it?" and so on. Their traffic, I read, is maybe 10% of what it was a few years ago because the AI models are really good at answering your tech questions. We haven't faced that kind of catastrophe, but it's hard to say. I think it's a really interesting question. As we see the changing use of the internet, we face the same kinds of questions.

Several years ago, with the rise of mobile, I remember the moment when mobile page views on Wikipedia became more than half of our page views. Of course, if you're on a mobile phone, it's pretty hard to edit Wikipedia, so we thought, "Oh, if everybody's on mobile now, are they going to stop editing Wikipedia?" Well, that didn't happen. They didn't stop editing Wikipedia. These changes are something that they're going to happen. As Wikipedia, we just have to roll with it and figure out, "Okay, what's our place in this ecosystem?"

Brian Lehrer: Okay, the book that you've published for the 25th birthday of Wikipedia, The Seven Rules of Trust: A Blueprint for Building Things That Last. I'm just going to read out the seven. One, make it personal. Two, be positive about people. Three, create a clear purpose. Four, be trusting. Five, be civil. Six, be independent. Seven, be transparent. Now, that sounds like it could be anything from a self-help book for salespeople to relationship advice.

Jimmy Wales: [laughs]

Brian Lehrer: I think you're helping to address polarization in society, so how much is that your goal with the book?

Jimmy Wales: It is a big goal. When we look at the Edelman Trust Barometer survey, which has been going on since about 2000, we've seen this enormous decline in trust in journalism, in politics, in business, in each other. This is leading to all kinds of negative effects. I think it's something that we need to address. I think it's really important that we step back and say, "Oh, how do we rebuild a culture of trust? What are the things that we can do in our own lives, in our own organizations? What are the things we should insist upon with our leaders, our political leaders, and businesses, to get back to a culture of trust?" When you live in a world of trust, everything's much easier, and everything's much more pleasant. If you live in a world of distrust, wow, you end up in a really sad and angry place.

Brian Lehrer: How would you begin to apply your seven rules of trust? I'm just going to give you an open mic to go to anything in the book to any specific fields where public trust is declining. The news media, science, the government in general. Want to pick one of those and match it up with one of your seven rules?

Jimmy Wales: Yes, I think a great one is to look at one of the issues I talk about in the book, and this is one of the areas people might be surprised at my view. I thought when The Washington Post decided not to endorse a presidential candidate in the last election, I thought, "Well, very bad timing, doing it just before the election," because that made it seem like some sort of implicit endorsement or a favor for Trump or whatever you might have viewed that as.

Stepping away from the timing of it, I think there's good evidence that when newspapers endorse political candidates, it reduces trust not only with people who disagree, but also with people who agree with that endorsement, because you suddenly feel like, "Okay. Well, I like this endorsement. I agree with it, but is this paper now going to just be shilling for their political candidate?" I think that idea of elevating neutrality or re-elevating neutrality is really important.

The endorsements on the editorial pages is a small piece of that overall thing, but I do think it's really important. As the media has become more partisan over the years, then the trust has fallen. There's lots of reasons for it. We could have a whole hour-long discussion of the challenges of the business model of journalism and how that's been damaged by a lot of different factors. The response to that by becoming more partisan hasn't really helped trust. I think we should really re-examine that.

Brian Lehrer: It's interesting. I guess people who did not like The Washington Post's decision to stop making the endorsements would perhaps even cite some of your seven rules of trust. For example, be transparent. "Well, here's what our editorial board thinks is right, or who they think would be the best candidate," and then they try to pair that with, "Well, the news pages are separate from the editorial pages." Of course, they have to communicate that clearly, but that's your rule number three: Create a clear purpose.

Jimmy Wales: Yes, I think that's right. I think the challenge here is I do think it's completely fine. One of the people I interview very favorably in the book is Tom Friedman, who clearly has views. He puts those views forward, but he makes it very clear that those are his views and not the views of The New York Times. I think that's a fine place to be. I think that the newspaper itself as an institution should really strive to be neutral. In fact, I think it's one of the things that a lot of news organizations should look at their hiring practices and say, "Look, if we have an ideologically monolithic staff of journalists, we're going to have blind spots. We're not going to understand certain stories, and we need to seek out diversity in hiring."

Now, normally, we think about that. We think about minority voices like, "How about people of color?" and things like that. We should also think about, "What about Christians? What about conservatives?" Are we getting the full, rich tapestry of humanity to take part in those discussions in the newsroom to make sure we don't have blind spots? This is a huge challenge, of course. I'm not suggesting it's easy, but I do think it's important.

Brian Lehrer: Do you go to trust in science at all or public health in the book?

Jimmy Wales: Definitely, yes, I think it's really important. One of the things that I talk about there is, at the very, very early stages of the pandemic, the public was told, "Don't bother with masks." Then whatever, a month later, we were told, "Masks are mandatory." That contradiction came about in no small part because the health authorities did not trust the public to take clear and honest information, and not panic and buy up all the masks and cause a shortage. I think they could have been more transparent all along, and that was a big mistake. That cost a lot of trust. There's a lot of elements like that, like when you don't trust the public to be able to handle information, you skew it in one way.

I think a lot of people in the environmental movement have been found to be a bit guilty of this, hyping up the negatives because you feel like, "Oh, you have to scare the public in order to get them to do something, so let's talk only about the negative." No. You know what? Actually, that actually may reduce people's trust when they find out that you've overhyped it and instead to say, "Actually, look, here's some facts, and here's the things that we're not quite sure about and all of that," which a lot of people do, and that is the right way to present science. I think that's really important.

Brian Lehrer: It's funny, those last few answers that you just gave. I don't know how many people would accuse that of being from a Woke-a-pedia.

Jimmy Wales: [laughs] Well, I wouldn't think so. I wouldn't think so. Certainly, I hope that what I stand for and what Wikipedia stands for is serious, thoughtful discourse and dialogue. Depending on where you sit, you may feel, and you may be right, actually, that, "Well, gee, people on the other side are not interested in that," and that is sometimes the case. Certainly, there are areas where the toxicity, for example, on social media, if you go onto X, you can find whole pockets of people who frankly are not the least bit interested in the truth. They're interested in campaigning for their tribe. Yes, okay. Fine, that's problematic. Those aren't the people I'm talking about.

Brian Lehrer: Is that who you're being hit by, you think?

Jimmy Wales: Yes, sometimes. There's no question about that, that people have an agenda. Somebody important has said we're Woke-a-pedia, and so they jump on that bandwagon without actually pausing to take a look and to think and to listen to what we're saying. Yes, hard to say.

Brian Lehrer: Jimmy Wales, co-founder of Wikipedia, which turns 25 on January 15th. He's the author of the brand-new book, The Seven Rules of Trust: A Blueprint for Building Things That Last. Thank you so much for sharing it with us.

Jimmy Wales: Well, Brian, thanks for having me on. It's been a lot of fun.

Copyright © 2025 New York Public Radio. All rights reserved. Visit our website terms of use at www.wnyc.org for further information.